Figure's humanoids read each other's minds while making beds

PLUS: rice-sized touch sensor for robots, NVIDIA open-sources humanoid training stack, and Tokyo's fully unmanned robot laboratory

Welcome back to your Robot Briefing

Figure AI just showed two humanoid robots tidying a bedroom together without any direct communication—they coordinated purely by watching each other through their cameras. It's the first time a single neural network has managed multi-robot collaboration straight from visual input to action.

This isn't about robots following a script. The real question for manufacturers and logistics operators: if robots can coordinate by observation alone, does that eliminate the integration headache of getting different systems to talk to each other?

In today's Robot update:

Figure's robots learn to read each other's minds while making your bed

Snapshot: Figure AI demonstrated two humanoid robots tidying a bedroom by coordinating purely through visual observation, with no wireless messaging and no shared control system. The demo marks the first time a single neural network has handled multi-robot collaboration directly from camera input to physical action, moving beyond choreographed demos into autonomous collaboration that mirrors how human workers naturally coordinate.

Breakdown:

Takeaway: The shift from centralized control to visual coordination matters because it could simplify deployment considerably: operators would no longer need custom integration for each multi-robot setup. Companies evaluating warehouse or logistics automation should note that this could make scaling from one robot to many operationally simpler, though Figure hasn't disclosed pricing or commercial availability timelines for multi-robot deployments.

Rice-sized sensor gives robots human-like sense of touch

Snapshot: Researchers at Shanghai Jiao Tong University have developed a 1.7 mm optical sensor that measures force, pressure, and torque in all directions using light patterns instead of electronics. It could enable surgical robots to detect unsafe contact levels and identify hidden structures such as tumors beneath tissue. The sensor is small enough to fit inside miniature surgical tools where current force-sensing technology physically cannot.

Breakdown:

Takeaway: Medical device companies should track this because current robotic surgery platforms lack real-time force feedback in confined spaces, creating liability exposure and limiting procedure types. The sensor's ability to detect unsafe contact early, so that the robot can adjust its actions immediately, could expand the range of minimally invasive procedures robots can safely perform, though the commercialization timeline and regulatory pathway remain unclear.

NVIDIA open-sources full training stack for humanoid whole-body control

Snapshot: NVIDIA released the complete SONIC package, including data collection tools, GR00T VLA (vision-language-action) post-training code, and an inference implementation, enabling developers to train autonomous whole-body coordination policies on humanoid platforms like the Unitree G1. This removes the proprietary barrier that previously made humanoid AI development accessible only to well-funded robotics companies.

Breakdown:

Takeaway: This matters for automation planning because it dramatically lowers the technical barrier and cost for companies to experiment with humanoid applications beyond standard industrial arms. Operations leaders exploring custom automation should note this makes proof-of-concept development feasible without hiring specialized robotics AI teams, though hardware costs and deployment complexity remain significant hurdles.

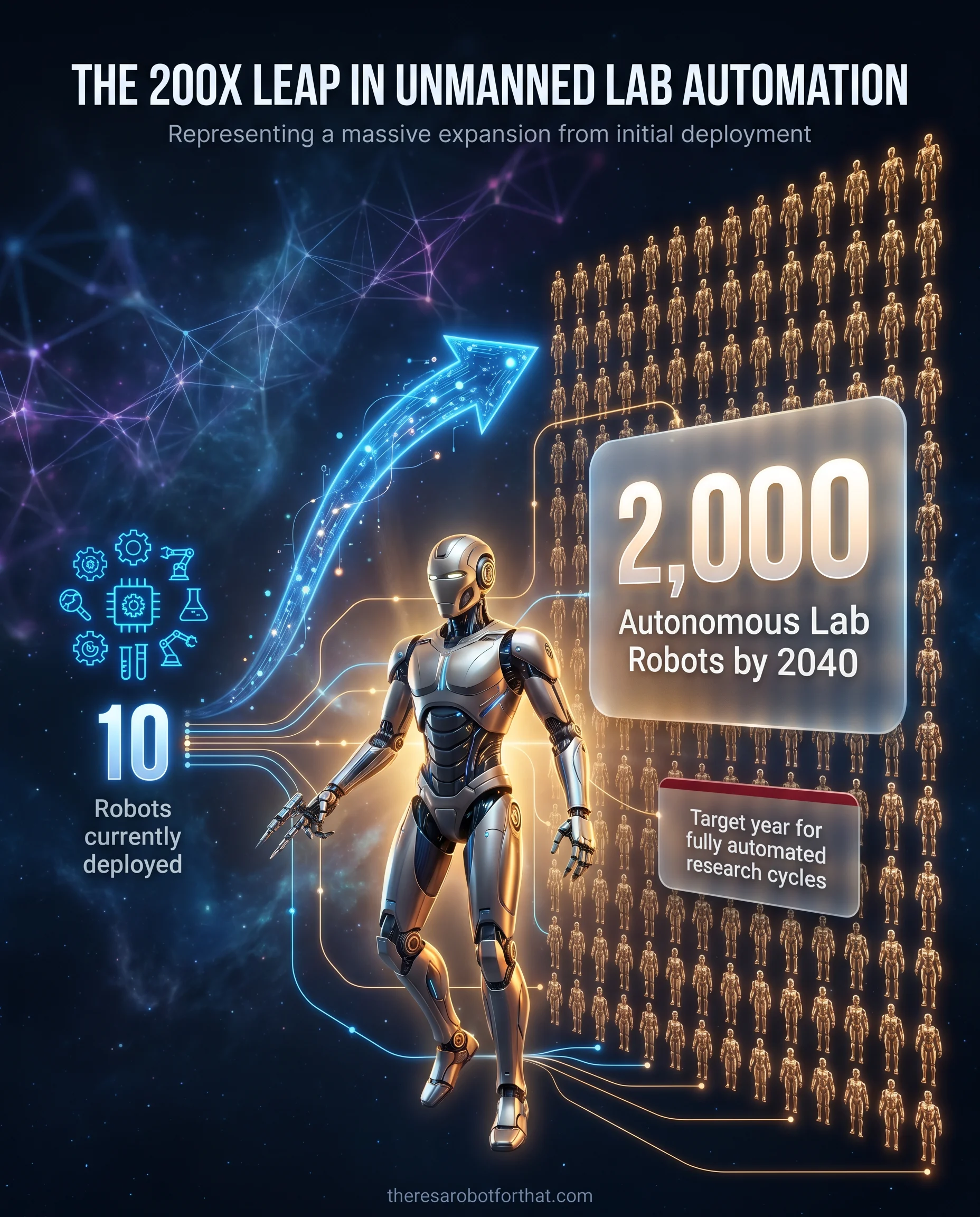

Tokyo university opens fully unmanned lab run by 10 research robots

Image Source: There's A Robot For That

Snapshot: Institute of Science Tokyo launched the Robotics Innovation Center, where humanoid robots autonomously conduct medical experiments, including cell cultivation and reagent handling, without human staff present. It plans to expand from 10 robots today to 2,000 by 2040 for nearly complete research automation. The facility demonstrates the operational viability of fully unmanned technical workflows rather than human-supervised automation.

Breakdown:

Takeaway: The planned 200x expansion from 10 to 2,000 robots signals confidence in operational reliability and economic viability that extends beyond pilot programs. Companies with laboratory operations, quality control testing, or other repetitive technical procedures should note that fully unmanned operation is now a demonstrated reality rather than a future concept, though the financial model and ROI timeline for the Tokyo deployment remain undisclosed.

Other Top Robot Stories

Daedong targets agricultural physical AI and autonomous equipment as part of a value-up plan aiming for $2.6 billion in sales by 2030, expanding its North American and European dealer network to 1,700 locations while shifting 25.9% of revenue to subscription-based agricultural services.

Neptune reported zero adverse events and 100% cecal intubation in a 50-patient first-in-human study of its Triton robotic endoscopy system, signaling momentum in gastrointestinal robotics as the sector moves beyond flagship surgical systems into specialist procedure applications.

Lotte launched a 7.25 billion won government-backed consortium with KAIST, Yonsei and Inha universities to train master's and doctoral-level physical AI specialists through 2029, while deploying humanoid robots in its AI LAB 3.0 convenience store pilot with Korea Seven.

GSMA announced the Humanoid Robot Football Penalties Challenge at MWC26 Shanghai running June 24-26, with international teams competing in penalty kicks to demonstrate real-time decision-making, motion control and precision in embodied intelligence systems.

🤖 Your robotics thought for today:

Figure's bedroom demo isn't about making beds. It's about deployment math. If robots coordinate by watching instead of messaging, you skip the systems integration step that kills most multi-robot projects. That's the difference between a six-month install and a six-week one.

I'm watching who ships this commercially first.

Until Wednesday,

Uli